Enterprise Clients & Domain

Successful B2B deliveries across enterprise and government clients. Currently building LLM-based AI agents at Pevo (healthcare & insurance), with prior NLP-based chatbot delivery to Hyundai Motor Group, Amore Pacific, Gangnam District Office, and DA Plastic Surgery.

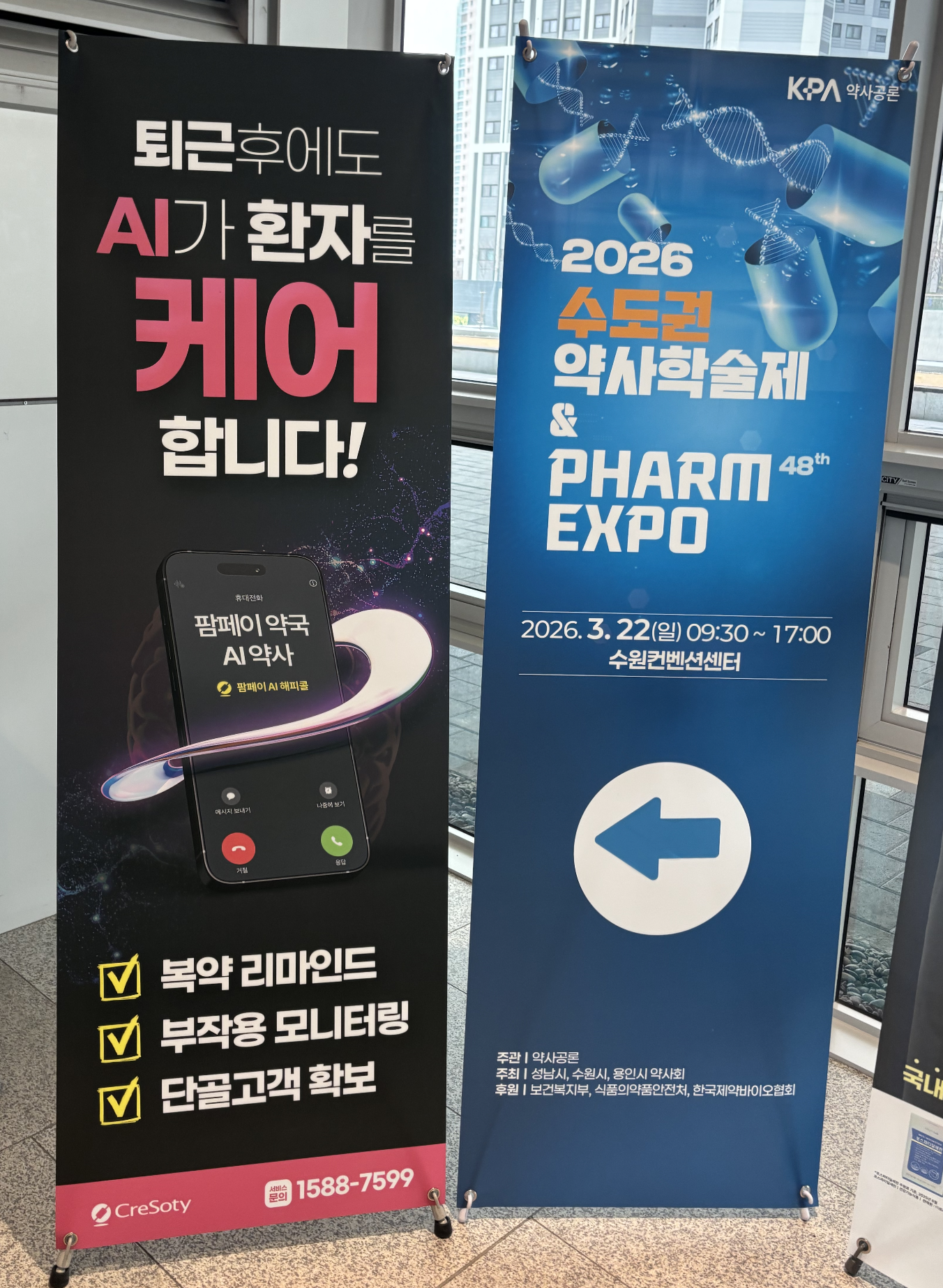

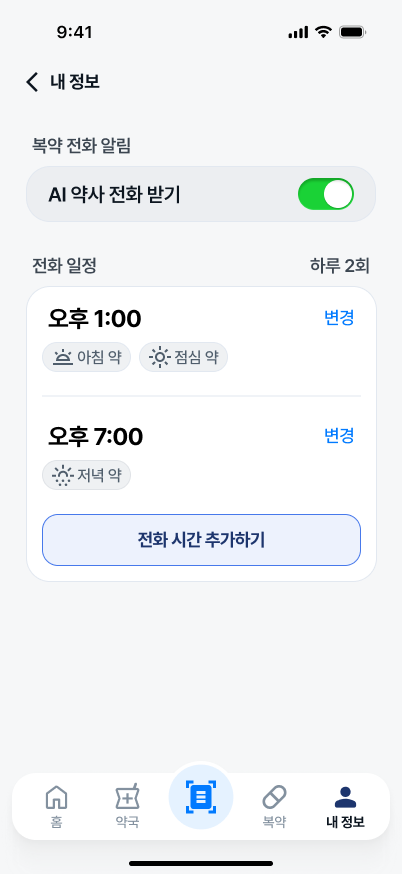

Cresoty (크레소티)

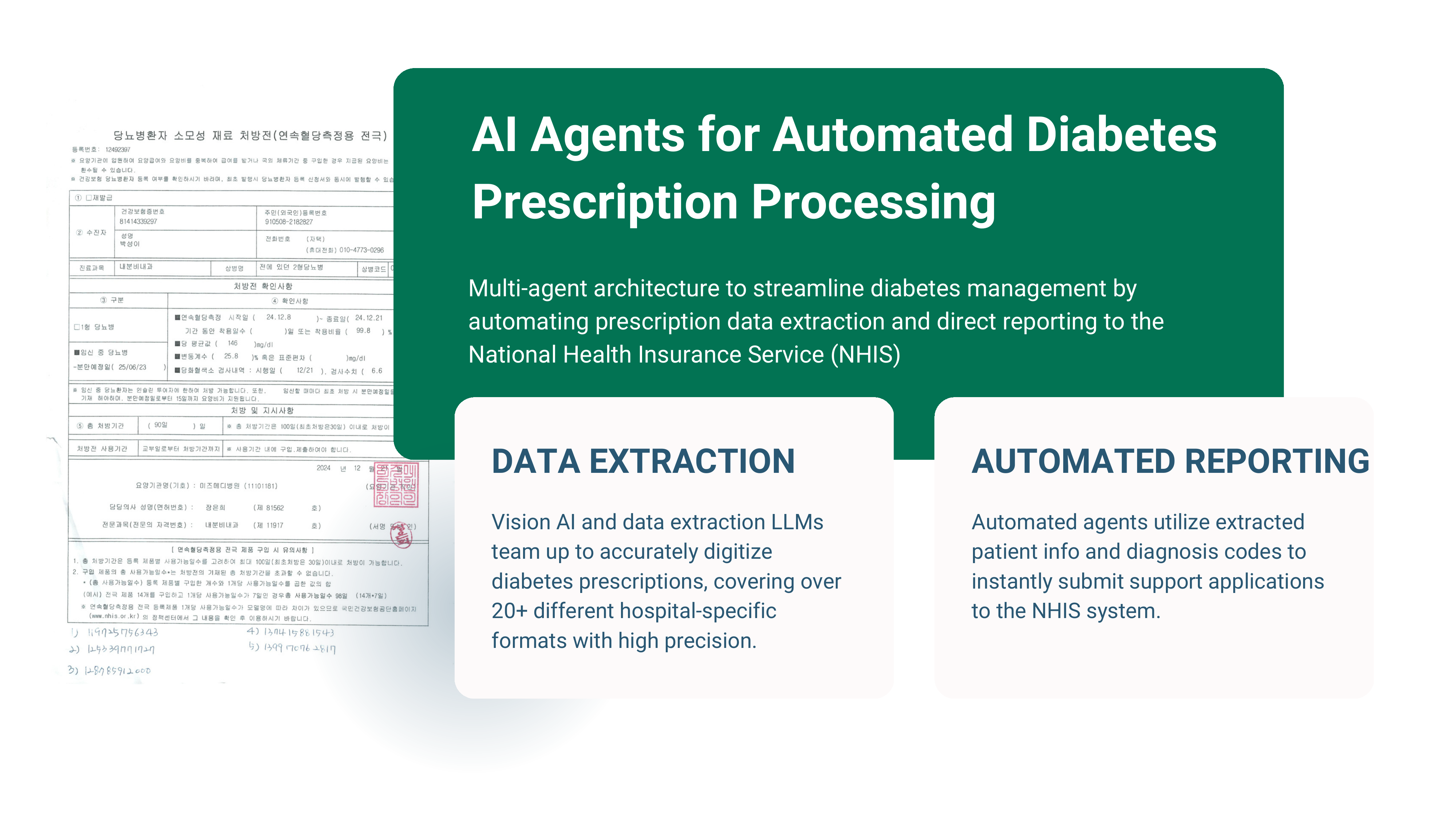

Korea's #1 pharmacy POS platform — 15K of the country's 25K pharmacies (~60% market share) run on Cresoty's infrastructure. Prescription AI agent (Agent 02) deployed on their platform. Voice AI agent (Agent 03) currently in the supply pipeline, targeting rollout across their pharmacy network.

Voice AI — Supply Pipeline

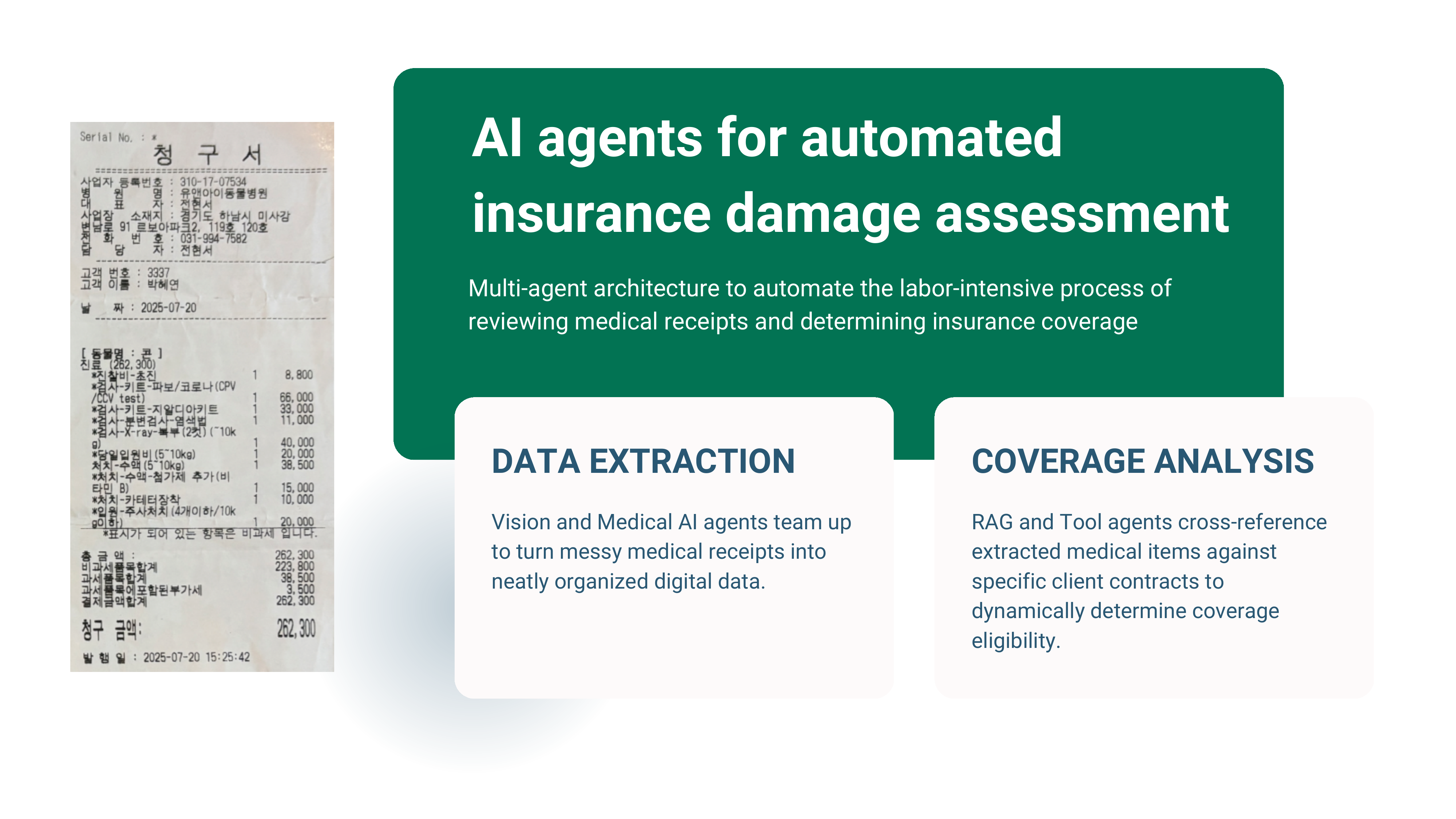

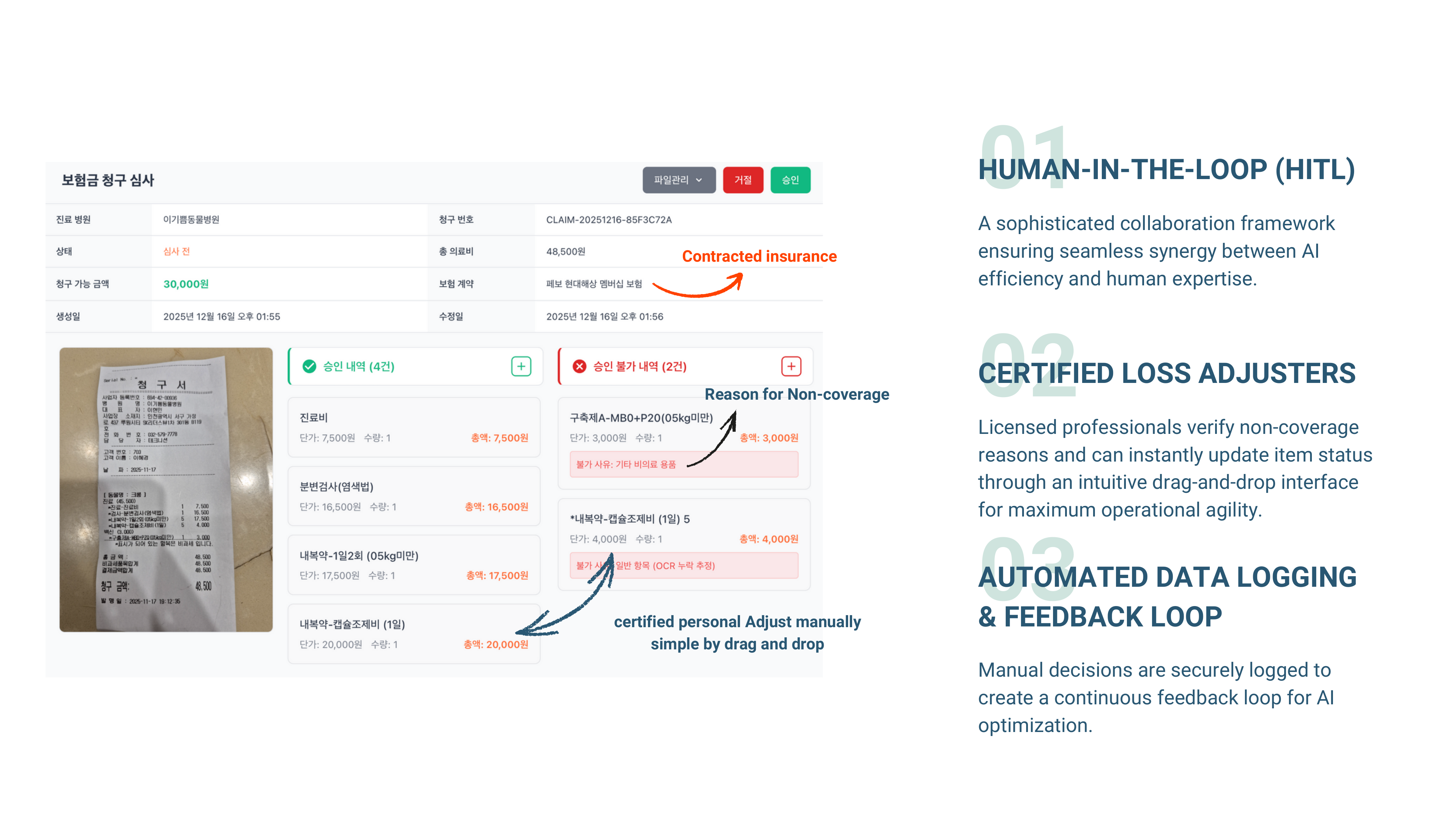

KB Insurance (KB 손해보험)

One of South Korea's largest insurers. Conducted a proof-of-concept for automated insurance claim damage assessment using a multi-agent AI architecture with human-in-the-loop verification.

LLM-based AI Agent

Hyundai Motor Group (Hyundai AutoEver)

Built and delivered an AI chatbot system for Hyundai Motor Group via its IT subsidiary, Hyundai AutoEver: a Kona test-drive reservation chatbot with an end-to-end conversational flow.

NLP-based Chatbot

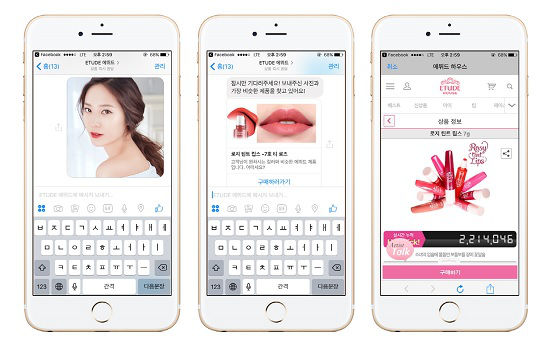

Amore Pacific (Etude)

AI-powered cosmetics recommendation chatbot for Etude House, a global beauty brand under Amore Pacific — South Korea's largest cosmetics conglomerate.

NLP-based Chatbot

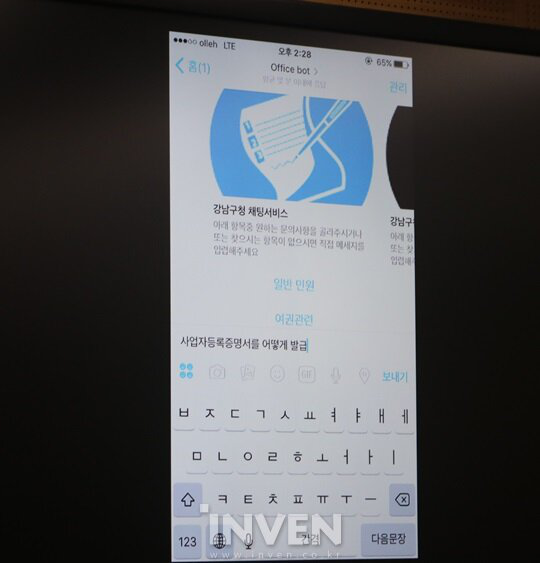

Gangnam District Office

Public service AI chatbot for Gangnam District — yes, that Gangnam from PSY's "Gangnam Style." One of Seoul's most prominent districts, serving residents with automated civic inquiry handling.

NLP-based Chatbot

DA Plastic Surgery (디에이성형외과)

AI consultation chatbot for one of Gangnam's largest medical groups — 42+ specialist doctors across plastic surgery, dermatology, hair transplant, and anti-aging centers.

NLP-based Chatbot

My Role: CTO & Forward Deployed Engineer

Led all technical decisions across healthcare and insurance AI systems at Pevo. Prior enterprise track record detailed below.

Enterprise AI in Production — Before Pevo

Enterprise B2B AI Chatbots (Mindset, 2016–2018)

Built and operated AI chatbot systems for major Korean enterprises: Amore Pacific (Etude House cosmetics recommendation), Hyundai Motor Group (Kona test-drive chatbot), and Gangnam District Office (public service inquiries). Managed end-to-end delivery including regulatory compliance, data security, and production operations.

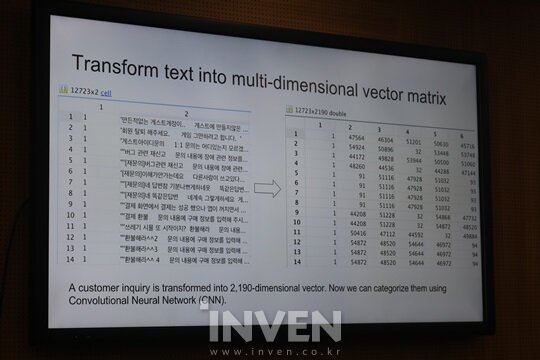

IGC Conference Speaker — AI Customer Service

Presented "AI Platforms for Game Customer Service" at Inven Game Conference 2016 (IGC), one of Korea's largest gaming conferences. Demonstrated NLP-based customer inquiry classification using multi-dimensional vector transformation — the same foundational approach that later evolved into today's LLM-based agent systems.
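To make the idea of classifying inquiries in a vector space concrete, here is a minimal sketch. It is not the method presented in the talk, whose details are not given here. It assumes a simple bag-of-words vectorization and nearest-centroid matching by cosine similarity. The sample inquiries, the labels, and every function name are hypothetical and invented for illustration.

```python
import math
from collections import Counter

# Hypothetical labeled inquiries, invented for illustration only.
TRAINING = [
    ("i cannot log in to my account", "account"),
    ("my account password reset is not working", "account"),
    ("i was charged twice for one purchase", "billing"),
    ("please refund my purchase payment", "billing"),
]


def vectorize(text):
    """Map text to a sparse word-count vector (one dimension per distinct word)."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse vectors; 0.0 if either is empty."""
    dot = sum(count * b[word] for word, count in a.items() if word in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def build_centroids(examples):
    """Sum the vectors of each label's examples.

    Summing instead of averaging is fine here because cosine similarity
    ignores vector length.
    """
    centroids = {}
    for text, label in examples:
        centroids.setdefault(label, Counter()).update(vectorize(text))
    return centroids


def classify(text, centroids):
    """Return the label whose centroid is most similar to the inquiry vector."""
    vec = vectorize(text)
    return max(centroids, key=lambda label: cosine(vec, centroids[label]))


CENTROIDS = build_centroids(TRAINING)
```

Calling `classify("refund for a double charged payment", CENTROIDS)` routes the inquiry to the category whose examples share the most vocabulary with it. A production system would typically swap the word-count vectors for learned embeddings while keeping the same overall shape: vectorize, compare, pick the closest category.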